2025 AI Model Recap

How AI foundation model innovation went exponential in 2025 and why 2026 is going to be even wilder

I’ve been too busy with new market launches for Partis clients to write much recently. But before the year closes, I wanted to cap it with something that’s been a big part of 2025: my year in start-up land, building software with a little (or a lot of) help from AI. A sexy topic indeed.

Two years ago, this would have been a short post. OpenAI largely set the pace, most people used the same default models, and the conversation was fairly settled. Over the last twelve months, that changed in a way that’s hard to overstate.

The AI race is now properly on.

It’s neck and neck across multiple fronts. The US and China are pushing in parallel, leaving Europe in the dust. Open source and closed source are advancing side by side. Google, Anthropic, xAI and OpenAI are all shipping at a relentless pace. There’s plenty of hype, but also plenty of substantive releases that materially changed what these systems, and mankind, could do.

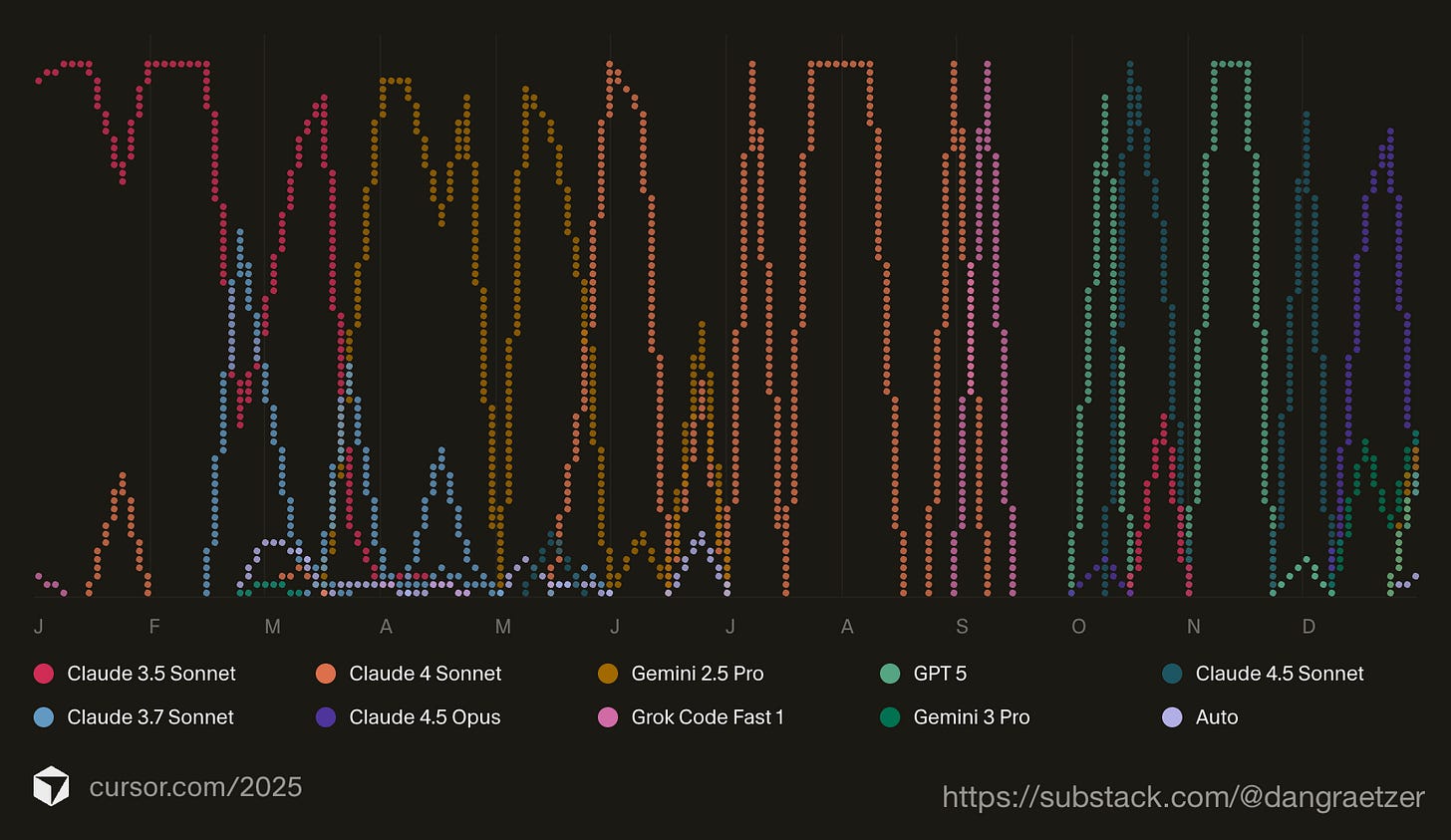

Looking back at my Cursor usage over the year, I find the pattern hard to follow. Models leapfrogged each other, one after the next, and not in one narrow area but across text, reasoning, vision, audio, and multimodal interaction at the same time. That breadth is what made this year feel different.

Google’s comeback is the clearest example. For a long time, Google’s AI story lived more in research papers than in daily use. Remember the George Washington scandal? Then the Gemini line started to sharpen. Gemini 2.5 hinted at what was coming. Gemini 3 blew everyone’s minds. The jump was substantial enough that people who had mentally discounted Google found themselves genuinely impressed. And let’s not forget that Demis Hassabis won a Nobel Prize! (Watch The Thinking Game if you haven’t yet.)

Anthropic followed a steadier arc, but one that built real confidence. Each release felt more composed, more capable of holding complexity without losing the thread. Claude Opus 4.5 stands out here. It handles long contexts and layered questions with a calmness that’s rare in fast-moving systems. If you’re a developer and haven’t tried it yet, just give it a go.

OpenAI, despite an overhyped GPT-5 release, has pushed hard on depth. The Pro models have become increasingly comfortable with dense, messy problems: long reasoning chains, competing constraints, ambiguous inputs. They reason meticulously through a problem without rushing to an answer. IMO, OpenAI is also the clear leader in user experience, and makes AI more accessible than any other vendor.

xAI adds another dimension. Insane amounts of compute, fast iteration, a bold and distinctive approach, and a willingness to move aggressively have raised the temperature, and lowered the guardrails, of the whole market.

One of the most under-appreciated shifts this year has been open source. Running models like Qwen locally, experimenting with DeepSeek, and spending time with Gemma 3 has been genuinely eye-opening, not to mention fun!

The pace of Chinese open source innovation in particular is hard to ignore. These models aren’t interesting because they’re free or local, but because they’re strong.

Capable reasoning, solid multilingual performance, and rapid iteration without the friction of big product launches. It’s a reminder that the frontier isn’t confined to a handful of US labs. Chinese open source is a serious force in shaping where this is all heading.

What ties all of this together is the pace. Innovation in foundation models this year has been exponential. Not one breakthrough dragging everything else along, but many advances arriving in parallel. When that happens, expectations reset quickly.

This isn’t limited to text. Vision models crossed into genuinely useful territory. Audio models became practical. Multimodal systems started to feel natural rather than stitched together. The gap between input types narrowed fast, and that has consequences far beyond demos.

All of this happened inside a single year.

When a technology is nearing maturity, patterns stabilise. Interfaces settle. Behaviour converges. But that’s not what we’re seeing. Everything is still fluid. People are discovering new ways to use these systems almost weekly. Products are being rethought mid-build.

That’s why I’m so optimistic. Not because one model will finally dominate, but because the curve still looks steep.

If this is what a year of compounding progress looks like, I’m genuinely pumped to see what we get in 2026.

Meanwhile, Happy Chanukah, Merry Christmas, and have a wonderful break with your families.